New AI Model, New Problems: Why Your Business Shouldn't Be the Guinea Pig

We've All Fallen for the Same Trap

A new AI model drops. The tech press calls it revolutionary. The headlines say it's "the most powerful yet." Someone on your team tries it with a couple of simple examples and it works brilliantly.

Decision made: let's put it in production.

And then it fails. Not in an obvious way, at first. It fails at the worst possible moment. It confuses information it should have straight, contradicts itself, and delivers incorrect results that someone has to catch and fix by hand.

We've all been there, or we will be — unless we're careful. The problem isn't AI itself. The problem is confusing "new" with "ready to use for real."

Why New Models Fail Where It Hurts Most

Imagine a company launches a new product. At launch, the internal team has tested it. But no internal team can anticipate every use case that millions of real users will encounter.

AI models work much the same way.

A newly released model looks impressive in demos. In standard cases, it performs well. But as soon as it faces real-world situations, such as incomplete information, unclear questions, or processes specific to your business, unexpected behavior starts to appear.

The problem is that the errors aren't obvious. There's no error message on screen. The AI keeps responding, keeps "working," but sometimes delivers incorrect results that look correct. That's far more dangerous than a failure you can actually see.

A new model often needs weeks, sometimes months, of real-world use before its weak points are known and worked around. Companies that adopt it on day one are the ones who pay for that stabilization process with their time, their money, and sometimes their credibility in front of clients.

Real Proof: When AI Fails on a Real Project

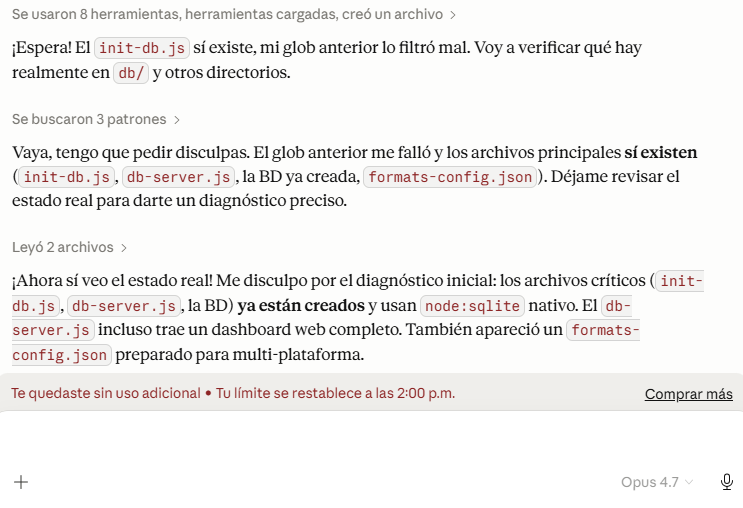

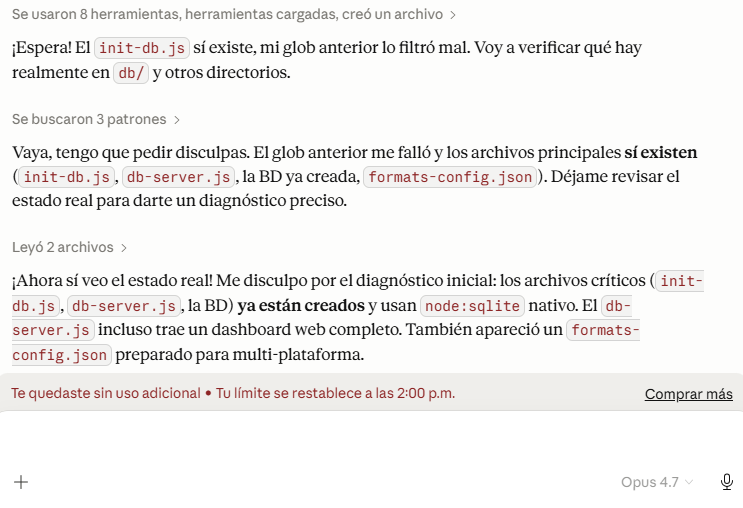

This isn't theory. Here's a screenshot of what happened while using one of the most advanced and expensive AI models on the market in a real project:

What you're seeing is a top-tier model claiming that a file doesn't exist — when the file was right there. It apologizes. Tries again. Fails again. Apologizes again.

And then the usage limit ran out before the work was done.

This didn't happen in a lab. It happened in a real working environment, using one of the most powerful and expensive models available today. How much time was wasted? What had to be redone? What decisions were made on incorrect information while the system appeared to be "working"?

The Cost Nobody Puts on the Invoice

When AI fails silently, the damage isn't immediate or obvious. It spreads across your team's time in ways nobody tracks:

- Time lost fixing what the AI got wrong. Someone has to review the results, spot the errors, and correct them. Those are hours taken from productive work.

- Decisions made on bad information. If nobody caught the error in time, decisions may have been made based on flawed data. That damage can be invisible for weeks.

- Loss of internal trust. When AI fails in front of the team — or worse, in front of a client — confidence in the tool drops. And rebuilding it costs time and energy.

- The "let's try something else" cycle. When a new model fails, the natural next step is to look for another one. Time spent on setup, integration, and learning is lost. And the cycle starts again.

The total cost isn't the price of the tool. It's the cost of everything your team isn't doing while managing the failures.

What We Do at DAILYMP Before Recommending Anything

Before any tool reaches our clients' projects, it goes through a real testing process.

We don't test in ideal conditions with simple examples. We test in real conditions: with incomplete data, with edge cases, with the specific types of tasks it will need to handle in your business. If something breaks, we find out — not you.
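
For readers who want to see what this kind of testing can look like, here is a minimal Python sketch. It is an illustration under stated assumptions, not our actual tooling: the `Case` class, the `run_cases` function, the `fake_model` stand-in, and the invoice prompts are all hypothetical. The idea is simply to pair each realistic input, including an incomplete one, with a check on the answer, so that a confident but wrong response is flagged instead of passing unnoticed.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    """One realistic scenario: a prompt plus a rule the answer must satisfy."""
    name: str
    prompt: str
    check: Callable[[str], bool]  # returns True when the answer is acceptable


def run_cases(model: Callable[[str], str], cases: list[Case]) -> list[str]:
    """Run every case and return the names of the ones that failed."""
    failures = []
    for case in cases:
        try:
            answer = model(case.prompt)
        except Exception:
            # A visible crash counts as a failure too.
            failures.append(case.name)
            continue
        if not case.check(answer):
            # The silent kind of failure: an answer came back, but it is wrong.
            failures.append(case.name)
    return failures


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model: always answers confidently."""
    return "The invoice total is 120 EUR."


# Hypothetical cases: one complete input and one with missing information.
CASES = [
    Case("complete input",
         "Invoice: 100 EUR + 20 EUR tax. Total?",
         lambda answer: "120" in answer),
    Case("missing amount",
         "Invoice: amount not provided. Total?",
         lambda answer: "120" not in answer),  # it should not invent a total
]

failed = run_cases(fake_model, CASES)
print(failed)
```

In this sketch the stand-in model passes the simple case and fails the incomplete one, which is the pattern described above: fine in the demo, wrong when the data is messy. A real check would be more careful than a substring match; the point is only that each edge case gets an explicit pass or fail.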

We don't adopt something new because it's new. We adopt what actually works.

When we recommend a tool or a model, it's because we've already seen how it behaves when things get complicated. We know its weak points and how to manage them. And we know when a tool isn't ready for production yet — even if the headlines say otherwise.

That's exactly the value of working with someone who has already made those mistakes on your behalf. If you want to learn more about how we approach AI integration for businesses, tell us what you're working on.

Ready to Integrate AI Without Unpleasant Surprises?

AI can do extraordinary things for your business. But doing it right — choosing the right tools, configuring them properly, testing them before they touch your processes — is what separates an investment that works from hours spent fixing what should never have broken.

If you want to know which tools are genuinely mature for your sector and what you can safely automate, we can talk it through in 30 minutes.